Database grooming for System Center Operations Manager 2007

This excerpt from "System Center Operations Manager 2007 Unleashed" takes you through the process of grooming your operational, data warehouse and ACS databases in SCOM 2007

|

This chapter excerpt from System Center Operations Manager 2007 Unleashed, by Kerrie Meyler, Cameron Fuller, John Joyner and Andy Dominey, is printed with permission from Sams Publishing, Copyright 2008. Click here for the chapter download or purchase the entire book here. |

Part of maintaining the integrity of your database environment is managing data retention for your Operational and Data Warehouse databases. Data retention also affects the size of the database and the amount of data to back up, which affects your backup requirements and scheduling.

Grooming the Operational Database

The OpsMgr2007 Operations console includes the ability to modify data retention settings for the Operations database under Administration → Settings → General → Database Grooming. The default setting for each of the data types is to remove or groom the data after seven days (see Figure 12.1). After the data is groomed, it is not recoverable unless it was previously backed up.

Within the Operational database, the p_partitioningandgrooming stored procedure runs automatically at midnight to perform the grooming. To run grooming manually, execute this procedure, which calls a series of other stored procedures that use your database grooming settings to perform the grooming.

FIGURE 12.1

Grooming the Data Warehouse Database

The Operations console does not have a graphical interface to modify data retention settings for the data warehouse. You can groom the data warehouse settings by modifying columns in certain tables inside the OperationsManagerDW database. Data is groomed out at different intervals depending on the degree of aggregation. Data is stored by type, and the ranges for data retention vary from 10 days to 400 days by default, depending on the type of data.

Grooming Settings

Microsoft stores grooming-related settings in two areas in the MaintenanceSetting table in the Data Warehouse database:

- Instance space — Discovered objects with their properties and relationships.

- Config space — This is space that contains information about your management packs, rules they contain, overrides you have created, and so on.

Columns of interest and their default values are shown in Tables 12.3 and 12.4.

Using the default settings for the config space, a sealed management pack is removed 400 days after it was uninstalled from all management groups that are members of the data warehouse! This is also true for non-sealed management packs, but OpsMgr retains up to three old versions of a non-sealed management pack as well.

TABLE 12.3 MaintenanceSetting Table Instance Space Settings

| Column | Value |

| LastInstanceGroomingDateTime | The last time grooming operations were performed |

| InstanceGroomingFrequencyMinutes | Frequency of the grooming process start in minutes (default: 480) |

| InstanceMaxAgeDays | Maximum age (since the day the instance was deleted) for the instance space objects (default: 400) |

| InstanceMaxRowsToGroom | Maximum number of objects to delete in one run (default: 5000) |

TABLE 12.4 MaintenanceSetting Table Config Space Settings

| Column | Value |

| LastConfigGroomingDateTime | The last time grooming operations were performed |

| ConfigGroomingFrequencyMinutes | Frequency of the grooming process start in minutes (default: 60) |

| ManagementPackMaxAgeDays | Maximum age for the management pack (since the day MP was uninstalled) (default: 400) |

| NonSealedManagementPackMaxVersion Count | Maximum number of non-sealed MP versions to preserve (independent of age) (default: 3) |

Data Retention

Settings controlling data retention are located in the StandardDatasetAggregation table. You can view the grooming settings by running the following SQL query:

SELECT AggregationIntervalDurationMinutes, BuildAggregationStoredProcedureName, GroomStoredProcedureName, MaxDataAgeDays, GroomingIntervalMinutes, MaxRowsToGroom FROM StandardDatasetAggregation

Table 12.5 displays the default settings returned by the SQL query.

The following applies to the results shown in Table 12.5:

- The first column is the interval in minutes that data is aggregated. NULL is raw data, 60 is hourly, and 1440 is daily.

- MaxDataAgeDays is the maximum number of days data is retained. Depending on the type of data and its degree of aggregation, defaults can range from 10 to 400 days.

- GroomingInterval Minutes is the grooming process frequency. Performance, Alert, Event, and AEM data is groomed every 240 minutes (4 hours); State data is groomed every hour.

TABLE 12.5 Data Returned from StandardDatasetAggregation Table

| AggregationInterval DurationMinutes |

BuildAggregation StoredProcedureName |

GroomStored ProcedureName |

MaxData AgeDays |

Grooming IntervalMinutes |

MaxRows ToGroom |

| NULL | NULL | EventGroom | 100 | 240 | 100000 |

| NULL | NULL | AlertGroom | 400 | 240 | 50000 |

| NULL | NULL | StateGroom | 180 | 60 | 50000 |

| 60 | StateAggregate | StateGroom | 400 | 60 | 50000 |

| 1440 | StateAggregate | StateGroom | 400 | 60 | 50000 |

| NULL | AemAggregate | AemGroom | 30 | 240 | 100000 |

| 1440 | AemAggregate | AemGroom | 400 | 240 | 100000 |

| NULL | PerformanceAggregate | Performance Groom | 10 | 240 | 100000 |

| 60 | PerformanceAggregate | Performance Groom | 400 | 240 | 100000 |

| 1440 | PerformanceAggregate | Performance Groom | 400 | 240 | 100000 |

To make sense of the grooming settings in this table, look at non-aggregated Event data, which is the first row of information in Table 12.5. We know that this pertains to Event information because of the referenced procedure name EventGroom (GroomStoredProcedureName). The information returned from the query tells us that Event data is not aggregated (AggregationIntervalDurationMinutes=NULL) and is saved for 100 days (MaxDataAgeDays). The EventGroom stored procedure grooms data (GroomStoredProcedureName), and runs every 240 minutes/4 hours (GroomingIntervalMinutes). Each time the stored procedure runs, it will groom a maximum of 100,000 rows.

You can use the following SQL code to change the grooming frequency for each type of data:

USE OperationsManagerDW

UPDATE StandardDatasetAggregation

SET MaxDataAgeDays = <number of days to retain data>

WHERE GroomStoredProcedureName = '<procedure name>' AND

AggregationIntervalDurationMinutes = '<aggregation interval duration>'

Go

Datasets

The data itself is retained by data type. Each data type is stored in a separate structure, called a dataset. Examples of these datasets include a performance dataset for performance data, a state dataset to monitor state transitions, an event dataset for events, and so on.

Management packs may also introduce new datasets. All datasets in existence—known today—are referred to by Microsoft as standard datasets. Microsoft maintains a set of tables for standard datasets that hold a description of the dataset including its data retention policies. A non-standard dataset does not have to follow the same rules; data retention settings for non-standard datasets are dataset specific.

Data retention for the standard dataset is set at the aggregation level, meaning that performance raw data (the samples themselves) is stored a certain number of days. The number of days may differ from the number of days the daily aggregates are stored for performance counters. These settings are stored in the StandardDatasetAggregation table, shown in Table 12.5 in the previous section.

The primary key for the StandardDatasetAggregation table is composite and consists of the database ID (from the Dataset table) and the Aggregation TypeID (from the AggregationType table). Default values will vary by dataset/aggregation type. The aggregation types, defined in the AggregationType table (which consists of the AggregationTypeID, AggregationTypeDefaultName, and AggregationTypeGuid columns), are as follows:

- 0—Raw data

- 10—Subhourly aggregation

- 20—Hourly aggregations

- 30—Daily aggregations

For performance reasons, data is not always groomed row-by-row. If the data inflow is high (typically the case for medium and large organizations for performance and event data), the Data Warehouse database uses additional tables to store data. This makes the grooming process (database row deletes) more efficient, as an entire table can be deleted rather than individual rows.

As an example, ten million performance samples are stored in the first instance of a table. After ten million records, OpsMgr creates a new table that holds the additional data and calculates a minimum and maximum date for the data in the first table. This information is stored separately in the StandardDatasetTableMap table. Grooming looks at this table to determine what data exists in each table and grooms accordingly. For OpsMgr 2007 SP 1, Microsoft has announced a ResKit tool named DWDATARP that allows you to view and set the data retention policies for all configured datasets.

The logic used by the grooming process is as follows:

- For a certain dataset/aggregation type combination, check to see if there is only one table in the data warehouse.

- If there is just one table, delete records row-by-row using the DELETE TOP SQL statement and MaxRowsToGroom parameter from the StandardDatasetAggregation Table.

- If there is more than one table, find the table with the oldest Maximum Date for data in it. If this date is older than the retention period, drop the entire table; otherwise, do not delete any rows.

The implication of following this process is that the data warehouse may not always be "current" on grooming. When the data in a table spans a month, some records are kept one cycle or month longer than necessary. However, the performance gains of dropping an entire table versus performing individual row deletes in SQL Server is enormous, so storing the data a little longer seems a reasonable tradeoff. Because report selection criteria includes a time period, any additional data is not visible to the user.

| NOTE |

| How Is Grooming Actually Performed? There are separate stored procedures to groom different types of data such as Performance, State, Alerts, Events, AEM data, and so on. The GroomStoredProcedure- Name column in Table 12.5 specifies the grooming procedures used for the data warehouse. You can use the standarddatasetgroom stored procedure in the data warehouse database to trigger grooming to happen manually. The procedure uses a parameter, datasetid. This value, listed in the dataset table, represents the type of data to be acted on. Steve Rachui documents this here. OpsMgr will call the standarddatasetmaintenance stored procedure to execute standarddatasetgroom. |

As OpsMgr aggregates most of the data in the Data Warehouse database, its growth on a day-to-day basis is less than the Operational database. However, since the retention period is longer, it will grow to be considerably larger.

Data Warehouse Backup Considerations

Because the Data Warehouse database has longer data retention periods, it can grow to be quite large, although it is initially smaller than the Operational database. Large databases can present potential backup issues. A terabyte database, as an example, can take a long time to back up and restore.

One approach is to create archived or segmented versions of the Data Warehouse database, separating it by different months, quarters, or years, depending on its size and your reporting requirements. This portioning gives you granularity in backups—once a database is archived, it does not have to be backed up on a regular schedule. It also makes potential restore operations quicker.

A sophisticated backup schedule that accommodates archive databases would back up the current data warehouse (OperationsManagerDW) but retain online copies of archived versions. As you backed up each archived database when it was current, you would simply maintain those tapes in long-term storage as long as required for reporting purposes. Segmenting the reporting information allows you to reduce the volume of data backed up on a daily basis while maintaining long-term availability of the data using archived databases and long-term tape storage.

There are (of course!) several caveats to this:

- Adjusting the grooming settings (discussed in the "Grooming the Data Warehouse Database" section of this chapter) to groom only at the end at your designated archival period.

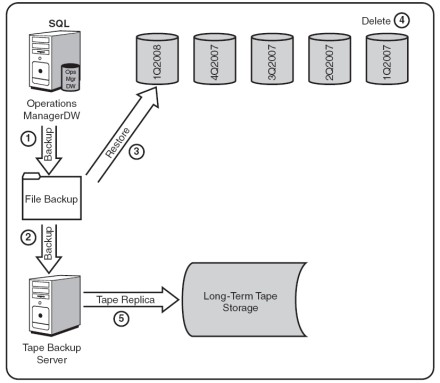

- Administrative overhead in managing the backup process at the end of each retention period. The end-of-period backup process adds complexity, which we illustrate in Figure 12.3.

- Tailoring your reports to run against archived data as necessary.

For purposes of illustration, consider a company monitoring 2,000 servers. For simplicity's sake, let's assume the company does not have third-party software with a SQL backup agent and uses Microsoft SQL Server's backup capability for its database backups. The company needs access to a year's worth of data. We can use the formula discussed in Chapter 4, "Planning Your Operations Manager Deployment," to determine the amount of space required for the data warehouse. Plugging these numbers into the formula (3 MB/day x Number of Agents x Retention Days) + 570 MB = Data Warehouse size gives a Data Warehouse database of nearly 2.2 terabytes (TB), which is too large to easily back up directly to tape. In addition, backup to a disk file requires equivalent storage on disk for the backup file, for a total of over 4TB. This is also too much storage for practical operations.

However, data for a single quarter will be just over 500 gigabytes (GB) or one-half terabyte. This amount is within the capability of the tape backup system. The company decides to break up the data warehouse into quarterly archives and accordingly sets the data warehouse grooming to groom data after each quarter (120 days). This configuration has been running for more than a year, so they have a steady state condition.

Figure 12.2 illustrates the backup process. You can see that the current data warehouse is available (OperationsManagerDW), as well as the four previous quarters of archived data (4Q2007, 3Q2007, 2Q2007, and 1Q2007). The process consists of two steps:

- Perform an online backup of the data warehouse to a disk file.

- Back up the backup disk file to tape. In the event of a disaster, the tape backup can be easily restored.

FIGURE 12.2

You can perform this backup process weekly, daily, or at whatever period meets your business requirements, with the procedure remaining the same. The amount of disk storage required is based on the size of the databases, which is based on the data captured by the agents. Calculating size for a 500GB database with one quarter of data, the company will need disk storage to hold five databases (5 x 500GB), plus an additional 500GB for the file backup that is archived to tape. This is a total of 3,000GB (3TB). You can contrast this figure with the original 4TB-plus storage requirement and see we have also conserved on disk storage! We also only need to back up 500GB at a time, rather than 2TB; making the backup operation more efficient.

Procedures are a bit more complex for the end-of-quarter backup process, shown in Figure 12.3. The following steps outline the process of transitioning at the end of 1Q2008:

- First, the data warehouse is backed up to a disk file. This is an online SQL Server backup, so there is no interruption in availability.

- Next, the backup file is copied to tape. In the event of a disaster, this tape backup can easily be restored.

- The backup file is restored to a new SQL Server database storing data for that quarter (in this example, 1Q2008).

- The database now outside the one-year data retention requirement (1Q2007) is deleted.

- The tape backup of the data warehouse in Step 2 (1Q2008) is replicated for longterm tape storage.

FIGURE 12.3

These processes can be automated scripts and jobs, or you can run them manually as endof- period procedures. The overall process is flexible and can be adjusted to support monthly archives rather than quarterly. The advantage of using monthly archives is that the backups are correspondingly shorter, but the report horizon will be shorter and only cover a single month. You could also extend this process to occur every 6 or 12 months! If it becomes necessary to query data in an archive, you could restore the archived backup as a database file and change the data source in SQL Reporting Services to point to that specific database.

This process is outside of any mechanisms designed or supported by Microsoft.

Grooming the ACS Database

Data is groomed out of the ACS database based on the data retention period specified during setup, with the default being 14 days. The ACS collector calls a SQL procedure to remove a partition that is outside of the data retention period. This procedure can be found on disk at

%SystemRoot%/system32/Security/AdtServer/DbDeletePartition.sql. The data retention period itself is specified in the dtConfig table in the Operations- ManagerAC database.

To update the data retention period, run the following SQL query:

USE OperationsManagerAC

Update dtConfig

SET Value = <number of days to retain data + 1> WHERE Id = 6

To retain 7 days of data, set <Value> = 8. Data is accumulated at approximately 7.6MB per day per workstation.

Further ACS database sizing information is available in Chapter 15.

![]() SCOM 2007 BACKUP AND RECOVERY

SCOM 2007 BACKUP AND RECOVERY

![]() HIntroductin

HIntroductin

![]() TiRoles of key SCOM files and databass

TiRoles of key SCOM files and databass

![]() TiEstablishing a backup schedue

TiEstablishing a backup schedue

![]() Tip 3: Database grooming

Tip 3: Database grooming

![]() TiBacking up the RMS encryption kes

TiBacking up the RMS encryption kes

![]() TiBacking up management pacs

TiBacking up management pacs

![]() TiBacking up repors

TiBacking up repors

![]() Tip 7: Disaster recovery planning

Tip 7: Disaster recovery planning