Use PowerShell workflows when performance matters

When time is of the essence, add parallel processing to your PowerShell scripts to perform tasks more efficiently by using the workflows feature in the automation tool.

With the release of Windows PowerShell 3.0 in 2012, Microsoft added workflows, a feature that further enhanced the automation capabilities of the management tool.

Most scripting languages perform tasks in a sequential manner. Even if you include loops, the code runs from one command to the next. PowerShell workflows get around this limitation by letting tasks run in parallel, which administrators might find particularly useful when automating the same commands across multiple Windows Server or desktop machines. PowerShell workflows have several other perks, such as resuming after a reboot of the target or local machine, adding checkpoints to resume a workflow if it's interrupted, and suspending and resuming a workflow that runs as a PowerShell job.

Explore the capabilities of PowerShell workflows

Why would you want to perform tasks in parallel? The answer is speed. For instance, you might need to perform a task on several machines, but you don't have enough time to sequentially work through the list of machines. Alternatively, there might be long stretches of time during which your code waits for something to happen; performing those waits in parallel reduces the overall time it takes to complete the set of tasks.

A PowerShell workflow lets your code survive a reboot of the target machine, which means you don't need to keep testing whether the machine is available again before restoring connectivity. The reconnection happens automatically.

One danger with a long-running script is that a problem that occurs before the script finishes can wipe out the results gathered up to that point. Adding checkpoints to the workflow saves the current state of the workflow, including results, to disk to reduce the risk of losing data through a failure.

Suspending and resuming workflows gives you the option to pause the workflow and use the resources for other things if a higher-priority task arises, such as a server or application failure that needs to be addressed immediately.
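
The checkpoint and suspend/resume behavior described above can be sketched roughly as follows. This is a minimal illustration, not a production pattern: the workflow name Invoke-LongTask and the placeholder strings are hypothetical, and the code assumes Windows PowerShell 5.1 because workflows aren't available in PowerShell 6 or later.

```powershell
# Windows PowerShell 5.1 only. Invoke-LongTask is a hypothetical name.
workflow Invoke-LongTask {
    # Placeholder for the first stage of real work.
    $stage1 = 'stage 1 complete'

    # Persist the workflow state, including $stage1, to disk.
    Checkpoint-Workflow

    # Placeholder for a long-running second stage.
    Start-Sleep -Seconds 30
    $stage2 = 'stage 2 complete'
    Checkpoint-Workflow

    "$stage1; $stage2"
}

# Run the workflow as a job so it can be suspended and resumed.
$job = Invoke-LongTask -AsJob

# The workflow job runs to its next checkpoint and then suspends.
Suspend-Job -Job $job -Wait

# Later, when resources are free again, pick up where it left off.
Resume-Job -Job $job
Receive-Job -Job $job -Wait
```

The trade-off is that every checkpoint writes state to disk, so checkpoints are usually placed after expensive steps rather than after every statement.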

These benefits make it sound ideal, so why aren't all scripts using PowerShell workflows? Unfortunately, there are two severe drawbacks to this feature: it's harder to code workflows and the feature is absent from PowerShell Core.

Coding PowerShell workflows

The first problem with using PowerShell workflows is that coding them is more complex than standard PowerShell scripts or functions:

- You need to explicitly state you want tasks performed in parallel.

- Workflows use workflow activities rather than cmdlets.

- Not all cmdlets are available as activities, so you need to create an InlineScript to use those cmdlets not available as activities.

- Parameter names can change between cmdlets and workflow activities -- for example, ComputerName becomes PSComputerName.

- Variable scoping differs depending on whether the code runs inside an InlineScript block.

The following script highlights these points:

    $cred = Get-Credential -Credential manticore\richard

    workflow ppdemo5 {
        param ($credential)

        $computers = Get-VM -Name W19* | Where-Object Name -ne 'W19ND01' |
            Sort-Object -Property Name |
            Select-Object -ExpandProperty Name

        foreach -parallel ($computer in $computers) {
            <#
              Invoke-Command isn't an activity, so it has to
              be inside an InlineScript block
            #>
            inlinescript {
                $count = Invoke-Command -ScriptBlock {
                    Get-WinEvent -FilterHashtable @{LogName='Application'; Id=2809} -ErrorAction SilentlyContinue |
                        Measure-Object
                } -VMName $using:computer -Credential $using:credential

                $props = [ordered]@{
                    Server     = $count.PSComputerName
                    ErrorCount = $count.Count
                }

                New-Object -TypeName PSObject -Property $props
            }
        }
    }

    ppdemo5 -credential $cred | Select-Object Server, ErrorCount

The code starts by defining a credential. The PowerShell workflow runs on a non-domain machine, which requires a credential to access domain machines. This isn't a workflow-specific issue.

The keyword workflow defines the workflow, which takes the credential as a parameter. Because the script is designed to run on a Hyper-V host, we use the Get-VM cmdlet to access the required VMs. Only the name of the machine is used.

The machines will be accessed in parallel because of the use of the -parallel option on foreach. This option is only available in PowerShell workflows. However, Invoke-Command is one of the cmdlets for which a workflow activity wasn't created, so an InlineScript block needs to be wrapped around the code. Not every cmdlet works in a workflow, but using InlineScript gets around this issue. The contents of each InlineScript block generated by the foreach loop will be processed in parallel.

Because the values for the -VMName and -Credential parameters of the Invoke-Command cmdlet are supplied by variables that are defined outside of the InlineScript block, you need to use the $using scope modifier to successfully access the correct values.

The results of the Invoke-Command call -- counting the number of occurrences of a particular event -- are used to populate the properties of the output object.

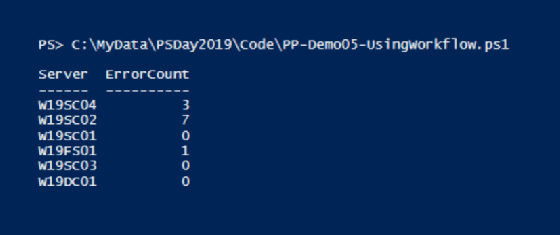

The final line of the code runs the workflow and selects the required properties to display. The results of the workflow are shown in Figure 1.

Using a workflow in this scenario -- which is valid if you have a large number of machines to access -- involves a lot of coding complexity and overhead to support a relatively small payload.

Workflows removed from PowerShell Core

The second major issue with using workflows is you can't use them in the open source PowerShell Core, which runs on .NET Core, a platform that doesn't offer the underlying workflow functionality. There are no plans to reverse this decision as far as we're aware.

So what options do you have in PowerShell Core instead of workflows?

There is no mechanism built into PowerShell Core for using checkpoints in your scripts. The only way to manage this would be to log the script's progress. For instance, when working with multiple servers, you could create a log entry as each server is successfully processed. If a problem occurs, then you would know where to restart the script. When working in parallel, you need to think about how you can have multiple tasks writing to the log.
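
A minimal sketch of that logging approach might look like the following. The server names and the log path are hypothetical, the Start-Sleep call stands in for the real work, and the code assumes PowerShell 7.0 or later because it uses ForEach-Object -Parallel. To avoid several tasks writing to the log at once, the parallel block only emits the server name and a second, sequential ForEach-Object does the writing.

```powershell
# Hypothetical server list and log location, used only for illustration.
$servers = 'W19FS01', 'W19FS02', 'W19SQL01'
$logPath = Join-Path ([System.IO.Path]::GetTempPath()) 'processed-servers.log'

# Servers recorded in the log by an earlier run are skipped on restart.
$done = @()
if (Test-Path -Path $logPath) { $done = @(Get-Content -Path $logPath) }
$pending = @($servers | Where-Object { $_ -notin $done })

$pending |
    ForEach-Object -Parallel {
        # Placeholder for the real per-server work.
        Start-Sleep -Milliseconds 200
        $_    # emit the server name only after its work has finished
    } |
    ForEach-Object {
        # This block runs in the calling runspace, one item at a time,
        # so only a single writer ever touches the log file.
        Add-Content -Path $logPath -Value $_
    }
```

If the script is interrupted and run again, only the servers missing from the log are processed.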

The suspend and resume options with workflows are tied to running the workflow as a job and can't be duplicated at this time.

It's not all doom and gloom. There are several options to perform parallel processing in PowerShell Core. We're going to use PowerShell 7.0 as the basis for the discussion because it presents an interesting option.

PowerShell 2.0 introduced background jobs, which isolate the processing by running each job in its own process. This can lead to issues on the machine that runs the jobs if the resource usage, especially memory and CPU, gets too high. You'll have to ensure your code can extract the data from the jobs and manage the jobs as they complete.
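
As a rough sketch of that job management, the following starts one background job per machine, waits for all of them, collects the output and cleans up. The computer names are hypothetical and the script block is a stand-in for real work; it returns the process ID to show that each job runs in its own process.

```powershell
# Hypothetical computer names; the script block is a stand-in for real work.
$computers = 'W19FS01', 'W19FS02', 'W19SQL01'

$jobs = foreach ($computer in $computers) {
    Start-Job -Name "Check-$computer" -ArgumentList $computer -ScriptBlock {
        param ($name)
        # Each background job runs in its own PowerShell process.
        [pscustomobject]@{ Server = $name; ProcessId = $PID }
    }
}

# Block until every job finishes, then collect the output and remove the jobs.
$results = $jobs | Wait-Job | Receive-Job
$jobs | Remove-Job

$results | Select-Object Server, ProcessId
```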

Closely related to standard background jobs are thread jobs, introduced in PowerShell Core 6.2. Each thread job runs in a separate thread rather than a separate process, which uses fewer resources compared to standard background jobs. However, because the jobs run in a single process, it's possible that a problem in one job could crash the process and affect the other running jobs. Thread jobs are managed with the standard job cmdlets.
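
The same pattern with thread jobs might look like this. It assumes the Start-ThreadJob cmdlet that ships with PowerShell 7; the computer names are hypothetical and the script block is again a placeholder.

```powershell
# Hypothetical computer names; the script block is a stand-in for real work.
$computers = 'W19FS01', 'W19FS02', 'W19SQL01'

$jobs = foreach ($computer in $computers) {
    Start-ThreadJob -ArgumentList $computer -ScriptBlock {
        param ($name)
        # Thread jobs share the process of the session that started them.
        [pscustomobject]@{ Server = $name; ProcessId = $PID }
    }
}

# Thread jobs are handled by the same cmdlets as standard background jobs.
$results = $jobs | Wait-Job | Receive-Job
$jobs | Remove-Job

$results | Select-Object Server, ProcessId
```

Every result reports the process ID of the calling session, which is the single-process behavior the paragraph above describes.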

The alternative to jobs is to use runspaces, where you work with the appropriate .NET classes to create a set of PowerShell instances that run in parallel. The advantage is that you'll develop an efficient and quick solution. The disadvantage is that the code is hard to develop and can be difficult to maintain. The PoshRSJob module from the PowerShell Gallery simplifies the use of runspaces by providing a set of functions that are analogous to the job cmdlets, so you work with background jobs that are backed by runspaces.
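
To give a sense of the extra code involved, here is a minimal sketch that uses a runspace pool directly through the .NET classes. The computer names and the work inside the script block are hypothetical, and error handling is left out for brevity.

```powershell
# Hypothetical computer names; the script block stands in for real work.
$computers = 'W19FS01', 'W19FS02', 'W19SQL01'

$work = {
    param ($name)
    Start-Sleep -Milliseconds 200
    [pscustomobject]@{ Server = $name }
}

# A pool of one to three runspaces shared by all the PowerShell instances.
$pool = [runspacefactory]::CreateRunspacePool(1, 3)
$pool.Open()

$tasks = foreach ($computer in $computers) {
    $ps = [powershell]::Create()
    $ps.RunspacePool = $pool
    $null = $ps.AddScript($work.ToString()).AddArgument($computer)

    # BeginInvoke starts the work asynchronously and returns a handle.
    [pscustomobject]@{ PowerShell = $ps; Handle = $ps.BeginInvoke() }
}

# EndInvoke waits for each instance to finish and returns its output.
$results = foreach ($task in $tasks) {
    $task.PowerShell.EndInvoke($task.Handle)
    $task.PowerShell.Dispose()
}

$pool.Close()
$pool.Dispose()

$results
```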

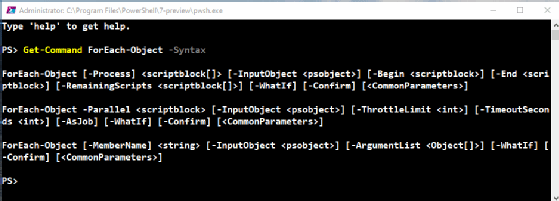

The PowerShell team added an interesting option in the release of PowerShell 7.0 with a -Parallel parameter added to the ForEach-Object cmdlet, as shown in Figure 2.

The scriptblock attached to the -Parallel parameter executes once for each value on the pipeline as you would expect. When you specify -Parallel, the cmdlet uses runspaces to perform that processing in parallel.

Our workflow example from earlier becomes the following.

    Get-VM -Name W19* | Where-Object Name -ne 'W19ND01' |
        Sort-Object -Property Name |
        Select-Object -ExpandProperty Name |
        ForEach-Object -Parallel {
            $count = Invoke-Command -ScriptBlock {
                Get-WinEvent -FilterHashtable @{LogName='Application'; Id=2809} -ErrorAction SilentlyContinue |
                    Measure-Object
            } -VMName $psitem -Credential $using:cred

            $props = [ordered]@{
                Server     = $count.PSComputerName
                ErrorCount = $count.Count
            }

            New-Object -TypeName PSObject -Property $props
        }

The script retrieves the names of the required VMs and pipes them into ForEach-Object. The code inside the ForEach-Object scriptblock is similar to the code used in the workflow but without the complexity of needing an InlineScript block or other workflow-related issues.

By default, ForEach-Object -Parallel has a limit of five runspaces in use at one time. You can adjust this with the -ThrottleLimit parameter to control the number of concurrent runspaces. Adding more runspaces uses more resources, so experiment carefully with this parameter.
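
A quick way to see the throttle at work is to time a set of artificial one-second tasks, as in this sketch. The numbers and the Start-Sleep call are placeholders for real work.

```powershell
$timer = [System.Diagnostics.Stopwatch]::StartNew()

# Six one-second tasks, but no more than two runspaces active at a time.
$output = 1..6 | ForEach-Object -ThrottleLimit 2 -Parallel {
    Start-Sleep -Seconds 1
    $_
}

$timer.Stop()
'Processed {0} items in {1:N1} seconds' -f $output.Count, $timer.Elapsed.TotalSeconds
```

With a limit of two, the six tasks run in three rounds, so the elapsed time can't drop below about three seconds. Raising the limit shortens the run at the cost of more concurrent runspaces.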

You can use the -AsJob parameter to wrap the runspaces in a single job if you want the processing to occur in the background.
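
A minimal sketch of the -AsJob variant follows, again with placeholder work inside the script block. The job it returns is handled with the standard job cmdlets.

```powershell
# -AsJob returns a job object immediately instead of blocking the session.
$job = 1..4 | ForEach-Object -Parallel {
    Start-Sleep -Milliseconds 500
    "Processed item $_"
} -AsJob

# Do other work here, then collect the output when it's needed.
$results = $job | Wait-Job | Receive-Job
$job | Remove-Job

$results
```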

The -Parallel parameter of ForEach-Object is available in PowerShell 7.0 and later. It isn't available in Windows PowerShell 5.1 or in PowerShell Core 6.x.

Editor's note: This article was republished after a technical review and light updates.